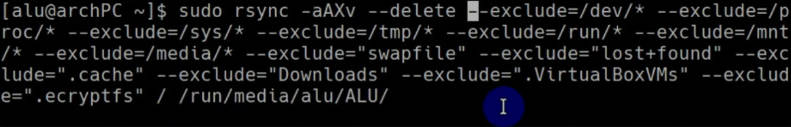

Which backup tool or solution would you use to back up terabytes and lots of files on a production Linux server?

Note that the files are all different and almost never modified. Usage is mostly adding files, so the data volume is 3 TB today and growing constantly at around +15 GB/day. Basic Unix tools are not enough: rsync does not keep history, and rdiff-backup fails miserably from time to time and corrupts the history. Moreover, these are all file-based backups, which put a lot of I/O wait on the server just to browse directories and query `stat()`. But I guess that, apart from R1Soft CDP, there is no way around that.

We tried R1Soft CDP backup, which is a block-level backup, and it proved good and efficient for all our other servers, but it systematically fails on the server with 3 terabytes and gazillions of files. We never tried big commercial solutions, except R1Soft CDP, since it was provided as an optional service by the datacenter hosting our servers. For more than two months now, the engineers at R1Soft and at the datacenter have been playing hot potato with the problem.

This amazing utility identifies and transfers only the differences between files; it currently allows us to take a backup of around 3GB of data (residing inside…

It only updates the files which have changed (modified, created, or deleted), but it also has the capability to preserve old files. I'm not sure how that function works, but it shouldn't be hard. With an appropriate script, it seems to be the ideal solution for you.

Everything backed up fine, so I went ahead, set up RAID, and then did a fresh install of Ubuntu Server. Here's the script I use (edited somewhat for clarity; hope I didn't cause problems with the edits):

```bash
#! /bin/bash
echo `date`" /bin/mirror_backup started" | unix2dos >> $logfile
echo "mirror_backup Automatically archive a list of"
echo " directories to a storage location"
# ...
mount -o remount,rw /mirror 2>&1 | unix2dos >> $logfile
echo `date`" Mount failed, aborting /bin/mirror_backup." 1>&2
echo `date`" Mount failed, aborting /bin/mirror_backup." | unix2dos >> $logfile
# ...
mkdir -p $ 2>&1 | unix2dos >> $logfile
# ...
mount -o remount,ro /mirror 2>&1 | unix2dos >> $logfile
echo `date`" /bin/mirror_backup completed successfully" | unix2dos >> $logfile
echo "Report generated on "`date` | unix2dos >> $report
echo "RAID drive status:" | unix2dos >> $report
echo "Disk usage per slice:" | unix2dos >> $report
echo "Disk Usage per User:" | unix2dos >> $report
du -h --max-depth 1 /home | unix2dos >> $report
echo "Disk Usage on Share drive:" | unix2dos >> $report
du -h --max-depth 1 /home/share | unix2dos >> $report
echo "Filesystem Usage Overview:" | unix2dos >> $report
du -h --max-depth 1 / | unix2dos >> $report
echo "Report Complete" | unix2dos >> $report
```

By the way, I wrote this script for my own use on my personal server at home. While anyone is absolutely free to use or modify it, I make absolutely no guarantees or warranties. With no changes to commit (on a second run-through, for example), it takes about 5 to 7 minutes to scan 1.5 TB of files. Of course, it's a lot slower on the first run-through.
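The question says plain rsync keeps no history, while one reply says rsync can preserve old files "with an appropriate script". One common way to get dated history out of rsync is `--link-dest`: each run writes a new snapshot directory, and files unchanged since the previous snapshot are hard-linked to it instead of copied. The sketch below is not the answerer's script; the `snapshot` function name, the timestamp naming scheme and the `latest` symlink are hypothetical choices. (rsync's own `--backup`/`--backup-dir` options are another route to keeping old versions.)

```bash
#!/bin/bash
# Sketch: dated rsync snapshots that share unchanged files via hard links
# (--link-dest), so every run keeps history but only stores new/changed files.
# The function name, snapshot naming and "latest" symlink are hypothetical.
set -uo pipefail

snapshot() {
    local src=$1 dest_root=$2
    local stamp=${3:-$(date +%Y-%m-%d_%H%M%S)}    # optional explicit snapshot name
    mkdir -p "$dest_root" || return 1
    # A relative --link-dest is resolved against the target directory,
    # so turn the root into an absolute path first
    dest_root=$(cd "$dest_root" && pwd)
    local target="$dest_root/$stamp" latest="$dest_root/latest"

    if [ -d "$latest" ]; then
        # Files identical to the previous snapshot become hard links to it
        rsync -a --delete --link-dest="$latest" "$src"/ "$target"/ || return 1
    else
        # First run: plain full copy
        rsync -a --delete "$src"/ "$target"/ || return 1
    fi
    # Repoint "latest" at the snapshot just written
    ln -sfn "$target" "$latest"
}
```

Run daily (for example `snapshot /home /backup/home` from cron), each snapshot directory looks like a full copy, and an old one can be removed with `rm -rf` without affecting the others, since hard-linked data stays on disk while any snapshot still references it. Note that this is still a file-level approach: every run still walks the tree and calls `stat()` on every file, so it does not remove the I/O load the question complains about.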
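The mirror_backup script in the thread remounts `/mirror` read-write, copies data, then remounts it read-only and logs each step. A minimal sketch of that pattern follows, assuming `rsync -a --delete` as the copy step (the original's copy command is not shown), a hypothetical log path, and directories passed as arguments. It also drops the `unix2dos` conversion for brevity and always attempts the read-only remount, even after a copy error.

```bash
#!/bin/bash
# Sketch of the "remount read-write, copy, remount read-only" pattern.
# Assumptions: rsync -a --delete as the copy step and a hypothetical log path;
# the original script's copy command and variables are not shown.
set -uo pipefail

MIRROR=${MIRROR:-/mirror}                          # mount point used in the original
LOGFILE=${LOGFILE:-/var/log/mirror_backup.log}     # hypothetical log location

log() {
    # Timestamped log line (the original also piped through unix2dos for CRLF)
    echo "$(date) $*" >> "$LOGFILE"
}

mirror_backup() {
    local dir status=0
    log "mirror_backup started"

    # Make the mirror writable; abort if the remount fails
    if ! mount -o remount,rw "$MIRROR" >> "$LOGFILE" 2>&1; then
        log "Mount failed, aborting mirror_backup."
        echo "$(date) Mount failed, aborting mirror_backup." >&2
        return 1
    fi

    # Copy each directory given as an argument to the same path under the mirror
    for dir in "$@"; do
        mkdir -p "$MIRROR$dir" || { status=1; continue; }
        rsync -a --delete "$dir"/ "$MIRROR$dir"/ >> "$LOGFILE" 2>&1 || status=1
    done

    # Always try to put the mirror back to read-only, even after a copy error
    mount -o remount,ro "$MIRROR" >> "$LOGFILE" 2>&1 || status=1
    log "mirror_backup finished with status $status"
    return "$status"
}
```

Run as root, for example `mirror_backup /home /etc`. Returning a non-zero status instead of exiting lets a cron wrapper decide whether to mail the log.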
